Robotics Platform

The Rainbow research group operates a robotics platform made up of five sub-platforms: vision robotics, indoor mobile robotics, advanced manipulation robotics, indoor unmanned aerial vehicles (UAVs), and haptic interfaces for shared control. They give team members reliable robotic systems on which to validate their research in visual servoing, visual tracking, active perception and shared control. On the software side, all the equipment is interfaced with ViSP, an open source software platform developed in the team, as well as with the ROS 1 and ROS 2 frameworks.

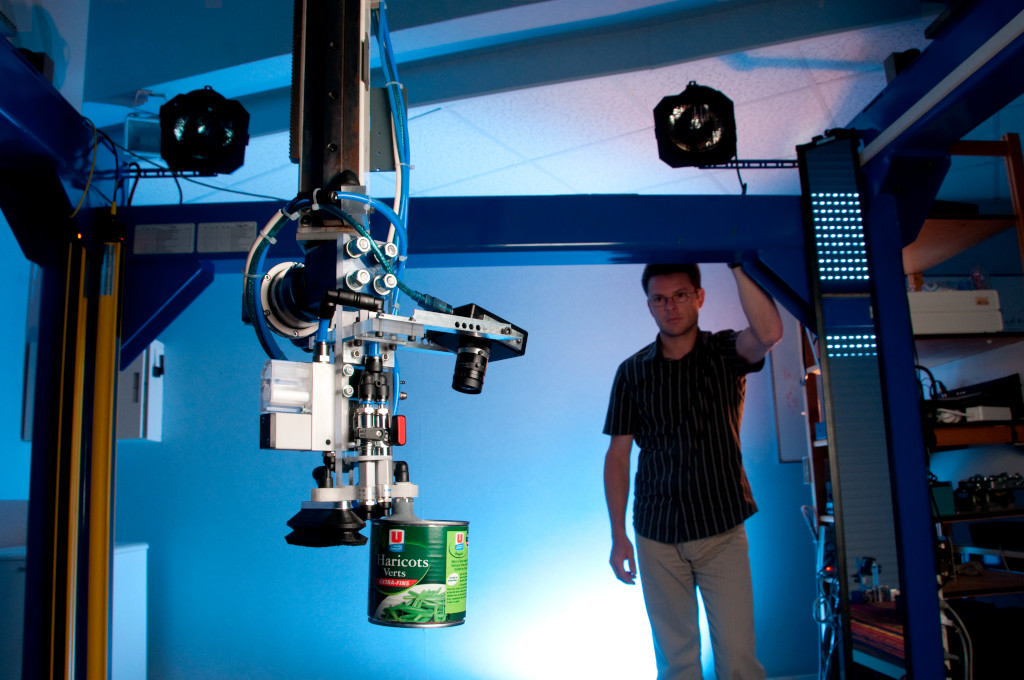

Vision Robotics

We operate an industrial robot to validate our research in visual servoing and active vision. It is a 6 DoF gantry robot built by Afma Robots in the 1990s, equipped with a collection of RGB and RGB-D cameras used to validate real-time vision-based tracking algorithms. A gripper can also be mounted on its end-effector.

© Inria / H. Raguet

Indoor Mobile Robotics

For fast prototyping of algorithms in perception, control and autonomous navigation, the team uses a Pioneer 3DX from Adept equipped with various sensors needed for autonomous navigation and sensor-based control.

Moreover, to validate our research on assisted living, we have three electric wheelchairs: one from Permobil, one from Sunrise and one from YouQ. Each wheelchair is controlled through a plug-and-play system inserted between the joystick and the wheelchair's low-level controller. This system lets us read the user's intention from the joystick position and control the wheelchair by applying corrections to its motion. The wheelchairs have been fitted with rangefinders to perform the servoing required to assist people with disabilities. In 2019, we bought a wheelchair haptic simulator to develop new human interaction strategies in a virtual reality environment.
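The idea of reading the joystick and correcting the resulting motion can be illustrated with a minimal sketch. It is not the controller running on the wheelchairs: the `Command` type, the `correct_command` function, the distance thresholds and the linear slow-down law are all hypothetical, chosen only to show one simple way a rangefinder reading could scale down a user's forward command.

```python
from dataclasses import dataclass


@dataclass
class Command:
    linear: float   # forward velocity in m/s (hypothetical convention)
    angular: float  # turn rate in rad/s


def correct_command(user: Command, front_distance: float,
                    stop_distance: float = 0.3,
                    slow_distance: float = 1.5) -> Command:
    """Scale the user's forward velocity by the distance to a front obstacle.

    The thresholds (in metres) are illustrative assumptions, not measured values.
    """
    if user.linear <= 0.0:
        # Reversing or standing still: the front rangefinder is not relevant.
        return user
    if front_distance <= stop_distance:
        scale = 0.0  # too close: cancel forward motion
    elif front_distance >= slow_distance:
        scale = 1.0  # far enough: leave the user's command untouched
    else:
        # In between: reduce the speed linearly with the remaining distance.
        scale = (front_distance - stop_distance) / (slow_distance - stop_distance)
    # The turn rate is passed through so the user can still steer away.
    return Command(linear=user.linear * scale, angular=user.angular)
```

In this sketch the user keeps full authority over steering, and only the forward speed is attenuated as an obstacle gets closer, which is one of the simplest forms a motion correction can take.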

© Inria / H. Raguet, C. Morel

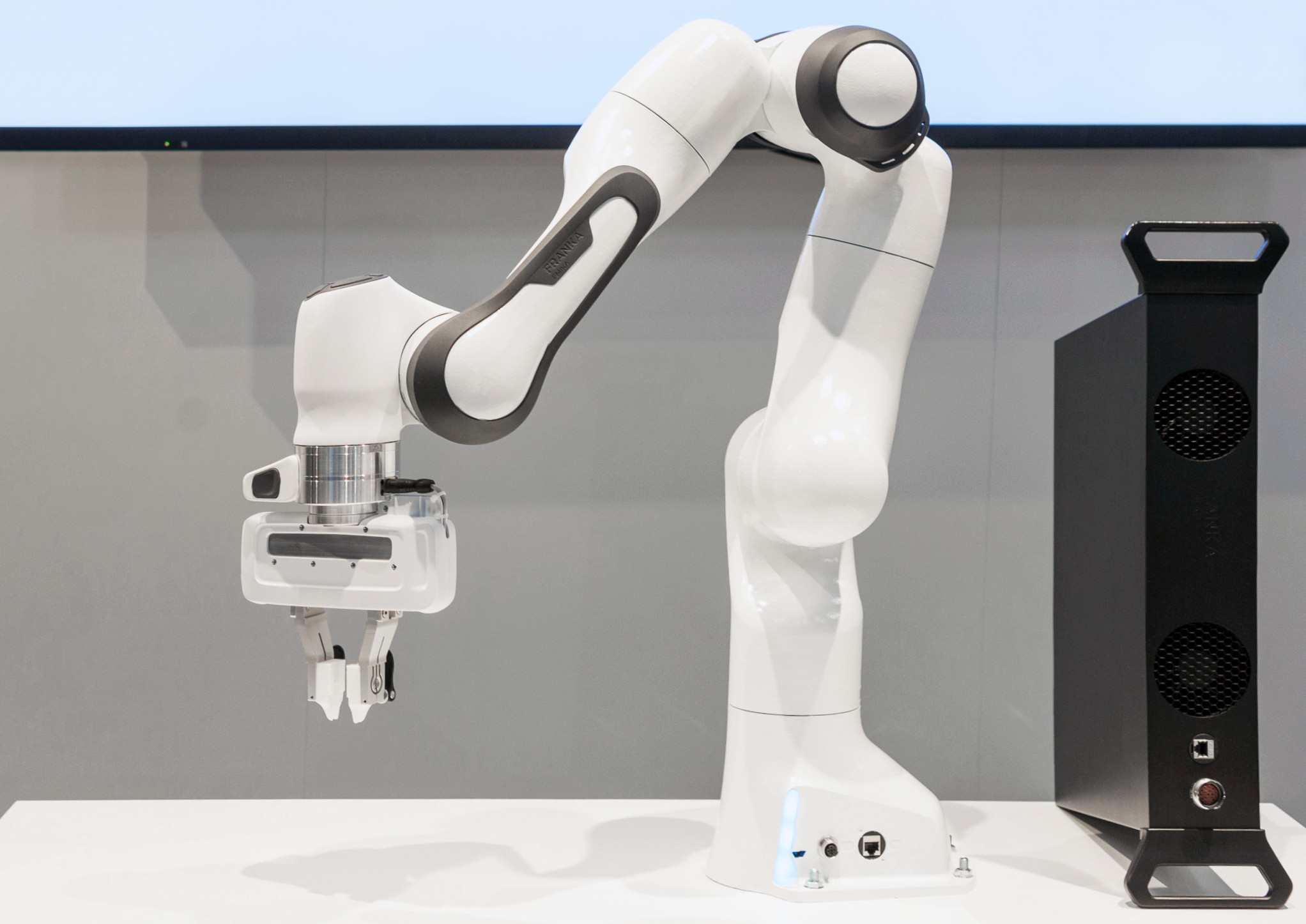

Advanced Manipulation Robotics

This platform is composed of two 6 DoF Adept Viper arms equipped with ATI force/torque sensors, one UR5 from Universal Robots, and two Panda lightweight arms from Franka Emika equipped with torque sensors in all seven axes. All these robots can carry an RGB or RGB-D camera attached to the arm end-effector. Moreover, an electric two-finger parallel gripper, a Reflex TakkTile 2 gripper from RightHand Labs, or a soft hand from qbrobotics can be mounted on the end-effector of the Panda arms to validate our research on coupling force and vision for controlling robot manipulators, including within shared control schemes for remote manipulation. A 6 DoF force/torque sensor from Alberobotics can also be mounted on the Panda end-effector to get more precision during torque control.

© Inria / H. Raguet

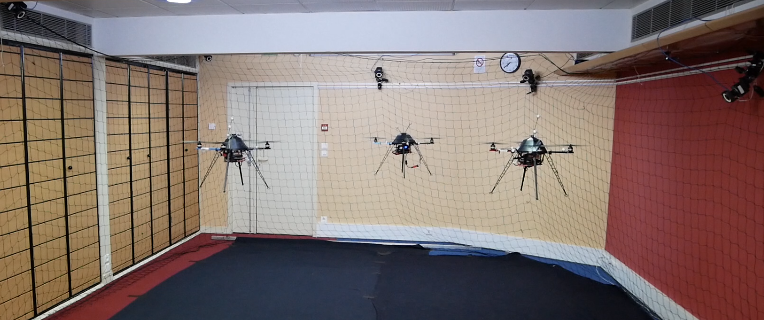

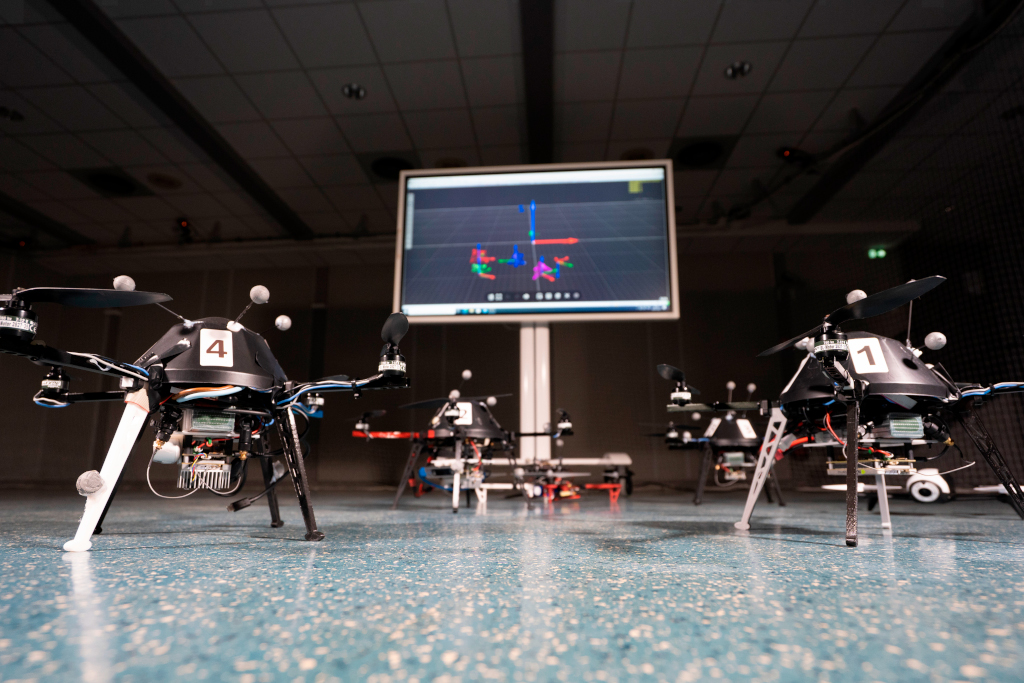

Indoor Unmanned Aerial Vehicles

To develop research activities involving perception and control for single and multiple quadrotor UAVs, we use four quadrotors from Mikrokopter GmbH and one DJI F450 controlled using a Pixhawk. They have been heavily customized: each quadrotor is equipped with an NVIDIA Jetson TX2 board running Ubuntu Linux and an RGB-D camera that can be used for visual servoing or visual odometry. The Mikrokopter quadrotors are interfaced with TeleKyb-3, the middleware that manages the experiment flow and the communication between the UAVs and the base station; it is based on the genom3 framework developed at LAAS in Toulouse. For experiments, we can use a small flying arena equipped with a Vicon motion capture system, or a larger arena equipped with a Qualisys motion capture system. The quadrotors serve as robotic platforms for testing a number of single and multiple flight control schemes, with special attention to the use of onboard vision as the main sensory modality.

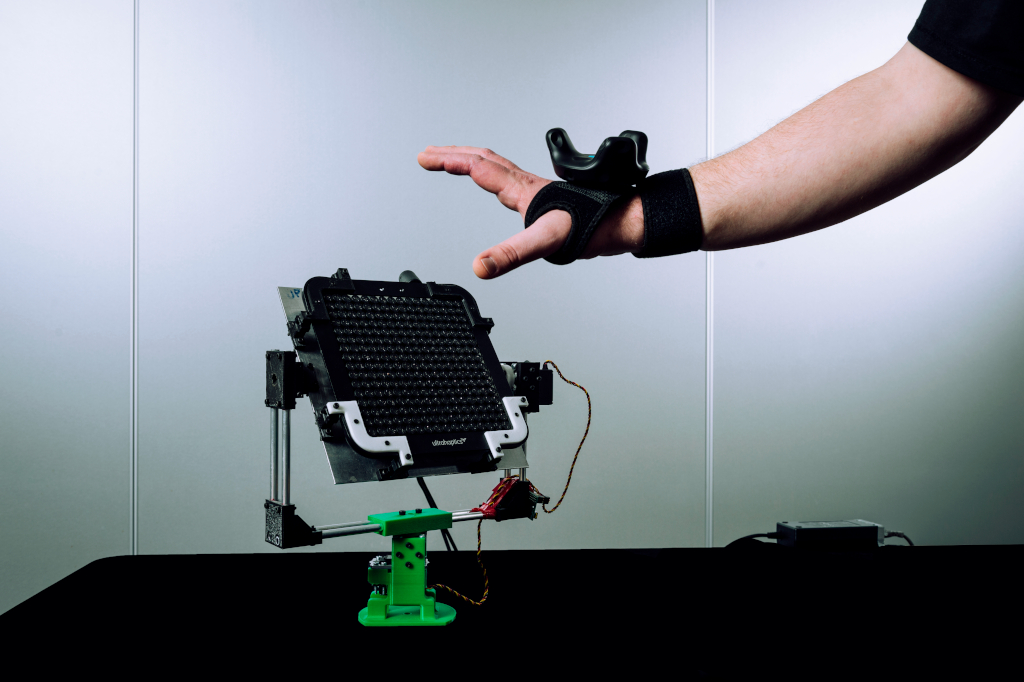

Haptics and Shared Control

Various haptic devices are used to validate our research in shared control. We have a Virtuose 6D device from Haption, which is used as the master device in many of our shared control activities. An Omega 6 from Force Dimension and devices on loan from Ultrahaptics complete this platform, which can be coupled to the other robotic platforms.