Israel D. Gebru, Sileye Ba, Georgios Evangelidis and Radu Horaud

International Conference on Latent Variable Analysis and Independent Component Analysis, 2015 (pdf)

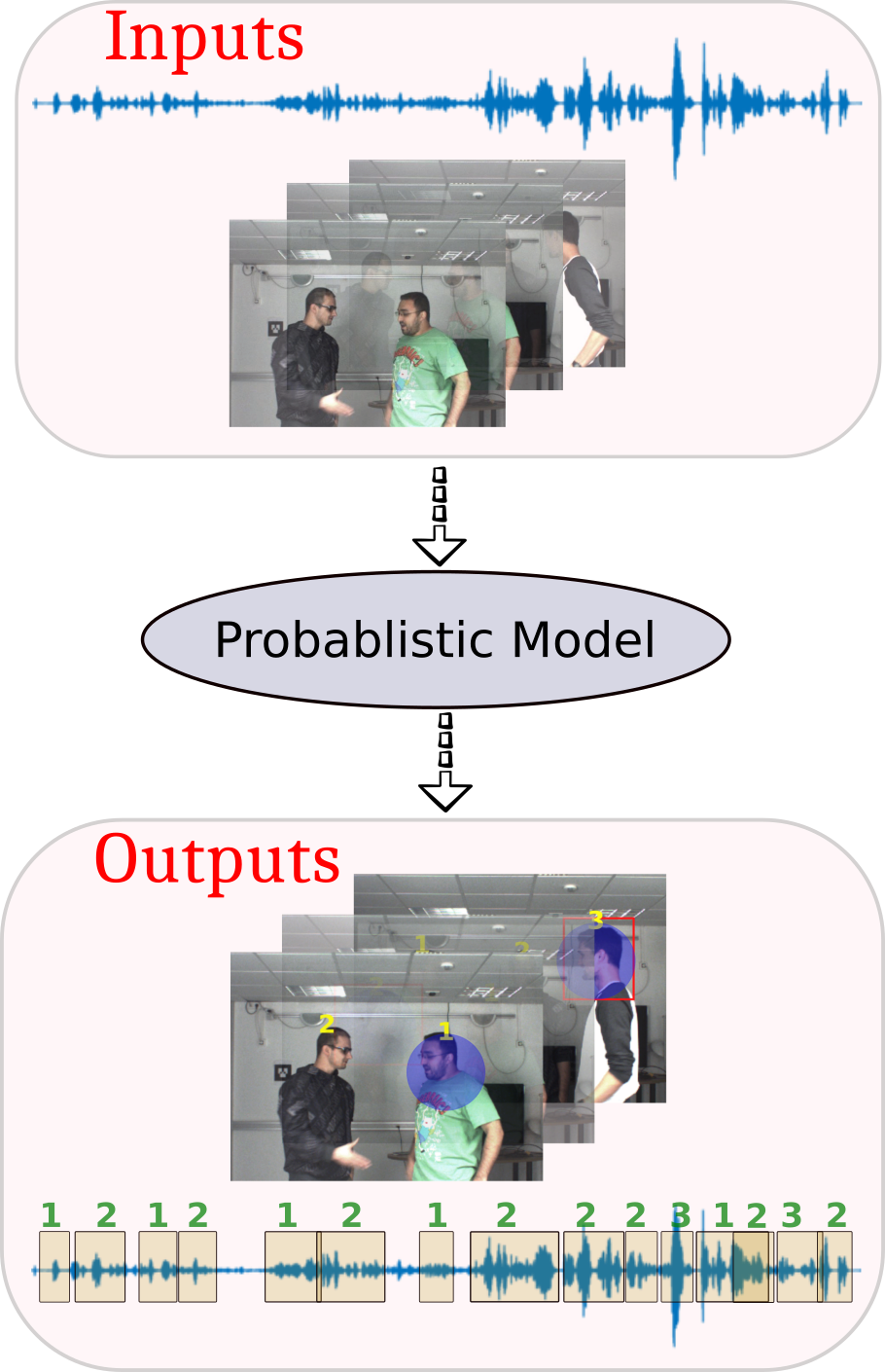

Speaker diarization is an important component of multi-party dialog systems in order to assign speech-signal segments among participants. Diarization may well be viewed as the problem of detecting and tracking speech turns. It is proposed to address this problem by modeling the spatial coincidence of visual and auditory observations and by combining this coincidence model with a dynamic Bayesian formulation that tracks the identity of the active speaker. Speech-turn tracking is formulated as a latent-variable temporal graphical model and an exact inference algorithm is proposed. We describe in detail an audiovisual discriminative observation model as well as a state-transition model. We also describe an implementation of a full system composed of multi-person visual tracking, sound-source localization and the proposed online diarization technique. Finally we show that the proposed method yields promising results with two challenging scenarios that were carefully recorded and annotated.

Speaker diarization is an important component of multi-party dialog systems in order to assign speech-signal segments among participants. Diarization may well be viewed as the problem of detecting and tracking speech turns. It is proposed to address this problem by modeling the spatial coincidence of visual and auditory observations and by combining this coincidence model with a dynamic Bayesian formulation that tracks the identity of the active speaker. Speech-turn tracking is formulated as a latent-variable temporal graphical model and an exact inference algorithm is proposed. We describe in detail an audiovisual discriminative observation model as well as a state-transition model. We also describe an implementation of a full system composed of multi-person visual tracking, sound-source localization and the proposed online diarization technique. Finally we show that the proposed method yields promising results with two challenging scenarios that were carefully recorded and annotated.

Below are results on audio-visual recordings from AVTrack-1 dataset.

Some of Our Related Papers

[1] Gebru, I. D., Alameda-Pineda, X., Horaud, R., & Forbes, F., Audio-visual speaker localization via weighted clustering. In IEEE International Workshop on Machine Learning for Signal Processing, MLSP 2014. Research Page

[2] Gebru, I. D., Silèye Ba, Georgios Evangelidis and Radu Horaud. Tracking the Active Speaker Based on a Joint Audio-Visual Observation Model. In ICCV 2015 workshop on 3D Reconstruction and Understanding with Video and Sound, 2015. Research Page

[3] Gebru, I. D., Alameda-Pineda, X., Forbes, F., & Horaud, R., EM algorithms for weighted-data clustering with application to audio-visual scene analysis. arXiv preprint arXiv:1509.01509. Research Page

Acknowledgements

This research has received funding from the EU-FP7 STREP project EARS (#609465) and ERC Advanced Grant VHIA (#340113).