Co-Localization of Audio Sources in Images Using Binaural Features and Locally-Linear Regression

Antoine Deleforge, Radu Horaud, Yoav Y. Schechner and Laurent Girin.

IEEE/ACM Transactions on Audio, Speech and Language Processing, 23(4), 718-731, April 2015

Abstract | Videos | Dataset | Matlab code | pdf from HAL | IEEE Xplore | Bibtex

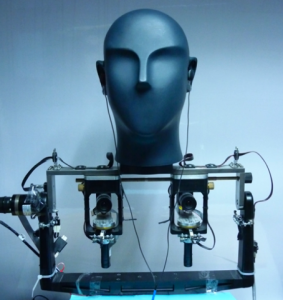

Abstract. The paper addresses the problem of localizing audio sources using binaural measurements. We propose a supervised formulation that simultaneously localizes multiple sources at different locations. The approach is intrinsically efficient because, contrary to prior work, it relies neither on source separation, nor on monaural segregation. The method starts with a training stage that establishes a locally-linear Gaussian regression model between the directional coordinates of all the sources and the auditory features extracted from binaural measurements. While fixed-length wide-spectrum sounds (white noise) are used for training to reliably estimate the model parameters, we show that the testing (localization) can be extended to variable-length sparse-spectrum sounds (such as speech), thus enabling a wide range of realistic applications, such as mapping sounds onto images.

Code

All the results presented in the paper and in the videos below can be reproduced using the Supervised Binaural Mapping Matlab Toolbox.

Data

Results presented in the paper are based on the publicly available audio-visual AVASM dataset.

Companion Videos (Go to Youtube Playlist)

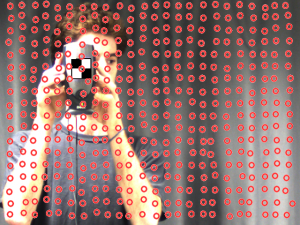

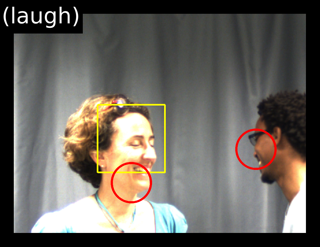

The following videos demonstrate the efficiency of the method for mapping real audio sources onto the field of view of a camera. They complement figures 4, 5, 7 and 8 of the paper.

-

Single speaker, single-source mapping (Figure 4)

-

Single speaker, single-source mapping: robustness to change of position in the room (Figure 5)

-

Two speakers, two-source mapping (Figure 7)

-

Single speaker, two-source mapping (Figure 8)