Tracking Gaze and Visual Focus of Attention of People Involved in Social Interaction

Benoit Massé, Silèye Ba and Radu Horaud

IEEE Transactions on Pattern Analysis and Machine Intelligence, 40(11), 2711-2724, 2018 | IEEEXplore | arXiv | HAL | Code | Demo | Datasets

The visual focus of attention (VFOA) has been recognized as a prominent conversational cue. We are interested in estimating and tracking the VFOAs associated with multi-party social interactions. We note that in this type of situation the participants look either at each other or at an object of interest; therefore their eyes are not always visible. Consequently, neither gaze nor VFOA estimation can be based on eye detection and tracking. We propose a method that exploits the correlation between gaze and head orientation. Both VFOA and gaze are modeled as latent variables in a Bayesian switching linear dynamic framework. The proposed formulation leads to a tractable learning procedure and to an efficient algorithm that simultaneously tracks gaze and VFOA. The method is tested and benchmarked using two publicly available datasets (Vernissage and LAEO) that contain typical multi-party human-robot and human-human interaction scenarios.

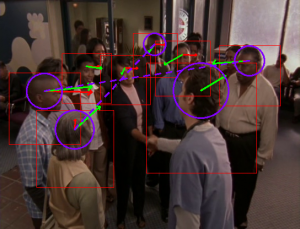

The two images shown here are extracted from the Vernissage dataset. They illustrate the principle of the method. Two persons (left-person and right-person) interact with a robot. While the robot speaks, the two persons turn their visual attention (gaze) towards the robot (left image). When right-person speaks, left-person turns her head to look at the speaker (right image). The algorithm estimates eye gaze (G) and visual focus (dashed blue line) from head pose (H). The learning and inference methods are based on a switching state-space dynamic model formulation (a switching Kalman filter): eye gaze and visual focus are estimated from head pose and from a set of objects that are located throughout the scene.
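
To make the switching state-space idea concrete, here is a minimal toy sketch. It is not the authors' implementation. It assumes a single yaw angle in degrees, a hypothetical linear relation in which the head covers a fraction `alpha` of the gaze rotation away from a resting direction `ref_yaw`, and known target directions. The function name `track_gaze_and_vfoa`, every parameter value, and the moment-matching collapse (a GPB1-style approximation) are illustrative assumptions, not details taken from the paper.

```python
import math

def track_gaze_and_vfoa(head_yaw, targets, ref_yaw=0.0, alpha=0.5,
                        rho=0.5, q=4.0, r=4.0, stay=0.9):
    """Toy 1-D switching Kalman filter with moment matching (GPB1-style).

    Hypothetical model (angles in degrees, 0 < stay < 1):
      gaze_t = (1 - rho) * gaze_{t-1} + rho * targets[v_t] + N(0, q)
      head_t = alpha * gaze_t + (1 - alpha) * ref_yaw + N(0, r)
    where v_t is the discrete VFOA index following a sticky Markov chain.
    """
    n = len(targets)
    if n < 2:
        raise ValueError("need at least two candidate targets")
    switch = (1.0 - stay) / (n - 1)      # probability of moving to each other target
    weights = [1.0 / n] * n              # posterior over the VFOA index
    mean, var = ref_yaw, 100.0           # broad Gaussian prior on the gaze angle
    gaze_track, vfoa_track = [], []

    for h in head_yaw:
        log_w, means, variances = [], [], []
        for k in range(n):
            # Predicted probability of VFOA k under the sticky transition model.
            prior = sum(w * (stay if j == k else switch)
                        for j, w in enumerate(weights))
            # Kalman prediction: gaze drifts towards target k.
            m_pred = (1.0 - rho) * mean + rho * targets[k]
            p_pred = (1.0 - rho) ** 2 * var + q
            # Kalman update with the observed head yaw.
            innov = h - (alpha * m_pred + (1.0 - alpha) * ref_yaw)
            s = alpha ** 2 * p_pred + r
            gain = alpha * p_pred / s
            means.append(m_pred + gain * innov)
            variances.append((1.0 - gain * alpha) * p_pred)
            # Log of (prior * Gaussian likelihood of the innovation).
            log_w.append(math.log(prior)
                         - 0.5 * (math.log(2.0 * math.pi * s) + innov ** 2 / s))

        # Normalise the VFOA posterior in a numerically stable way.
        top = max(log_w)
        weights = [math.exp(lw - top) for lw in log_w]
        total = sum(weights)
        weights = [w / total for w in weights]

        # Collapse the Gaussian mixture over gaze into a single Gaussian.
        mean = sum(w * m for w, m in zip(weights, means))
        var = sum(w * (p + (m - mean) ** 2)
                  for w, m, p in zip(weights, means, variances))

        gaze_track.append(mean)
        vfoa_track.append(max(range(n), key=weights.__getitem__))
    return gaze_track, vfoa_track


# Synthetic head yaw: ten frames turned left, then ten frames turned right.
head = [-15.0] * 10 + [15.0] * 10
gaze, vfoa = track_gaze_and_vfoa(head, targets=[-30.0, 30.0])
```

In this sketch the head only rotates halfway towards each target, yet the filter attributes the remaining rotation to the eyes, so the estimated gaze settles near the target direction while the VFOA index switches when the head turns. A real system would work with 2-D or 3-D orientations and learn the parameters from data, as described in the paper.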

The Vernissage dataset

The LAEO dataset (Looking At Each Other)

Additional paper

Benoit Massé, Silèye Ba, Radu Horaud. Simultaneous Estimation of Gaze Direction and Visual Focus of Attention for Multi-Person-to-Robot Interaction. International Conference on Multimedia and Expo, Jul 2016, Seattle, United States. pp.1-6, 2016.