Continuous Action Recognition Based on Sequence Alignment

Kaustubh Kulkarni, Georgios Evangelidis, Jan Cech and Radu Horaud

International Journal of Computer Vision (online) vol. 112, issue 1, March 2015, pp. 90-114

PDF on arXiv | BibTeX | PDF from HAL | Matlab code | Additional Papers | Videos

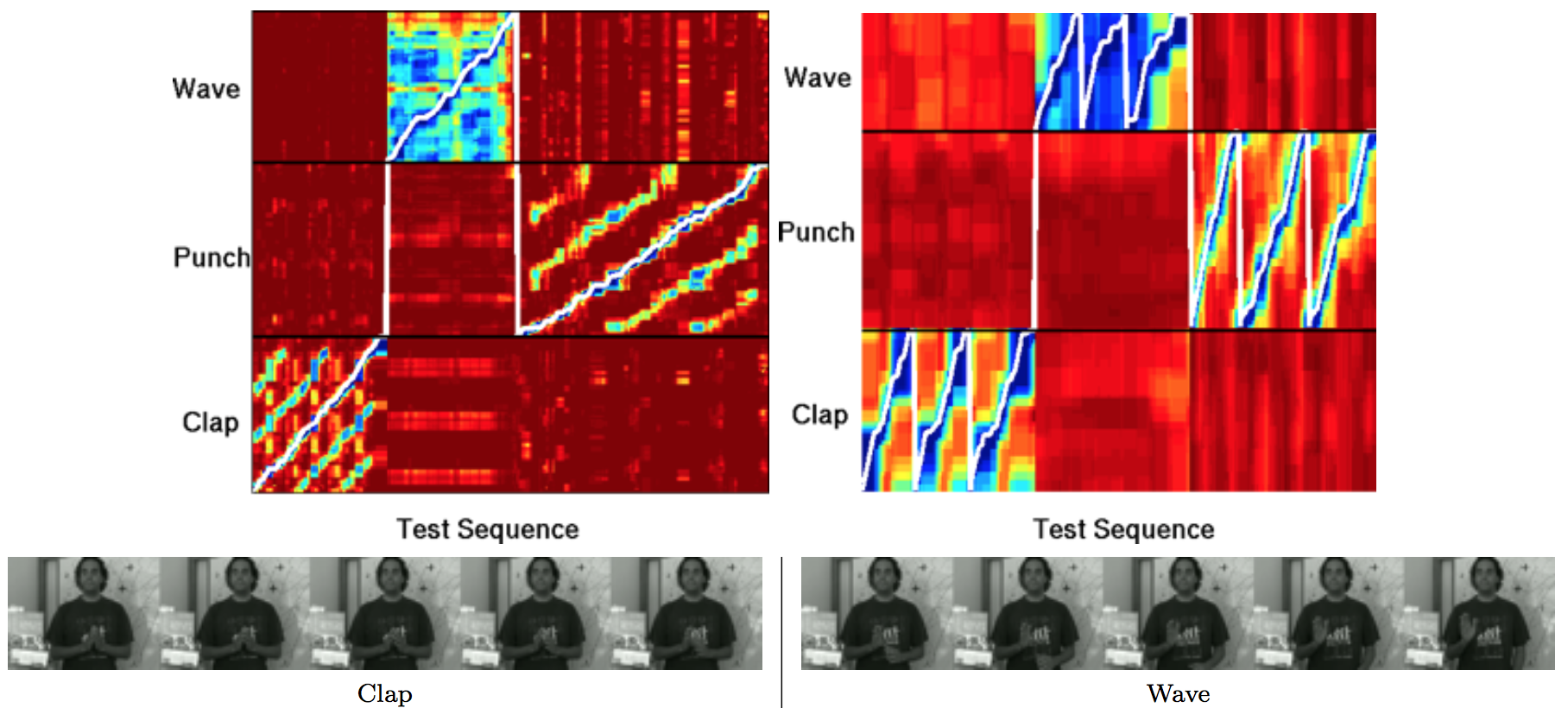

Abstract: Continuous action recognition is more challenging than isolated recognition because classification and segmentation must be carried out simultaneously. We build on the well-known dynamic time warping (DTW) framework and devise a novel visual alignment technique, namely dynamic frame warping (DFW), which performs isolated recognition based on a per-frame representation of videos and on aligning a test sequence with a model sequence. Moreover, we propose two extensions that enable recognition to be performed concurrently with segmentation, namely one-pass DFW and two-pass DFW. These two methods have their roots in continuous speech recognition and, to the best of our knowledge, their extension to continuous visual action recognition has been overlooked. We test and illustrate the proposed techniques with a recently released dataset (RAVEL) and with two public-domain datasets widely used in action recognition (Hollywood-1 and Hollywood-2). We also compare the performance of the proposed isolated and continuous recognition algorithms with that of several recently published methods.
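DFW builds on DTW. As a rough illustration of the underlying idea only, the sketch below is plain DTW, not DFW as defined in the paper: it aligns a test sequence of per-frame descriptors with each model sequence and assigns the label of the cheapest alignment. The Euclidean local cost, the three-way step pattern, and the helper names `dtw_cost` and `classify_isolated` are assumptions made for illustration.

```python
import numpy as np


def dtw_cost(test, model):
    """Classic DTW alignment cost between two per-frame feature sequences.

    test: (T, d) array, model: (M, d) array. The local cost is the Euclidean
    distance between frame descriptors (an assumption for this sketch).
    """
    T, M = len(test), len(model)
    # Pairwise frame-to-frame distances, shape (T, M).
    dist = np.linalg.norm(test[:, None, :] - model[None, :, :], axis=2)
    D = np.full((T + 1, M + 1), np.inf)
    D[0, 0] = 0.0
    for t in range(1, T + 1):
        for m in range(1, M + 1):
            # Extend the cheapest of the vertical, horizontal and diagonal predecessors.
            D[t, m] = dist[t - 1, m - 1] + min(D[t - 1, m], D[t, m - 1], D[t - 1, m - 1])
    return D[T, M]


def classify_isolated(test, models):
    """Return the label whose model sequence aligns with `test` at the lowest cost."""
    return min(models, key=lambda label: dtw_cost(test, models[label]))


# Usage with toy 1-D "descriptors" (hypothetical data).
models = {"up": np.array([[0.0], [1.0], [2.0]]), "down": np.array([[2.0], [1.0], [0.0]])}
print(classify_isolated(np.array([[0.0], [0.5], [1.0], [2.0]]), models))
```

Because warping absorbs differences in speed, a test sequence that repeats or lingers on some frames can still align with a model at low cost.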

Videos: These videos show examples of continuous action recognition based on the one-pass dynamic frame warping (OP-DFW) method described in the paper. The video on the right is the test sequence, and the video on the left shows frames selected from the training dataset by the proposed sequence alignment method.

Example of continuous recognition of “clap”, “punch”, and “wave” gestures.

Examples of continuous recognition from the Hollywood dataset.

Examples of continuous recognition from the RAVEL dataset.
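The one-pass idea behind OP-DFW comes from connected-word speech recognition: a single dynamic-programming sweep over the test frames lets an alignment leave the last frame of one model and enter the first frame of any model, so labeling and segmentation come out together. The sketch below is a generic one-pass recursion of that kind, not the paper's OP-DFW. The Euclidean local cost, the step pattern, the bookkeeping of segment start frames used for backtracking, and the name `one_pass_segment` are all assumptions made for illustration.

```python
import numpy as np


def one_pass_segment(test, models):
    """Jointly segment and label `test` as a concatenation of model sequences.

    test: (T, d) array of per-frame descriptors; models: dict label -> (M, d) array.
    Returns ([(label, first_frame, last_frame), ...], total_cost).
    """
    labels = list(models)
    T = len(test)
    # Accumulated cost and segment-start frame for every model frame at time t-1.
    prev_cost = {l: np.full(len(models[l]), np.inf) for l in labels}
    prev_start = {l: np.zeros(len(models[l]), dtype=int) for l in labels}
    # Best segment (over all models) ending exactly at each test frame.
    end_cost = np.full(T, np.inf)
    end_label = [None] * T
    end_start = [0] * T

    for t in range(T):
        cur_cost, cur_start = {}, {}
        for label in labels:
            model = models[label]
            M = len(model)
            local = np.linalg.norm(model - test[t], axis=1)  # assumed Euclidean frame cost
            c = np.full(M, np.inf)
            s = np.zeros(M, dtype=int)
            for m in range(M):
                if m == 0:
                    if t == 0:
                        cands = [(0.0, 0)]  # the first segment starts at frame 0
                    else:
                        cands = [
                            (end_cost[t - 1], t),  # new segment after the best one ending at t-1
                            (prev_cost[label][0], prev_start[label][0]),  # repeat model frame 0
                        ]
                else:
                    cands = [
                        (prev_cost[label][m], prev_start[label][m]),  # repeat this model frame
                        (prev_cost[label][m - 1], prev_start[label][m - 1]),  # diagonal step
                        (c[m - 1], s[m - 1]),  # advance in the model at the same test frame
                    ]
                best_cost, best_start = min(cands, key=lambda cand: cand[0])
                c[m] = local[m] + best_cost
                s[m] = best_start
            cur_cost[label], cur_start[label] = c, s
            # A segment of this model can end at t once its last frame is reached.
            if c[-1] < end_cost[t]:
                end_cost[t], end_label[t], end_start[t] = c[-1], label, int(s[-1])
        prev_cost, prev_start = cur_cost, cur_start

    # Backtrack through segment boundaries, from the last test frame to the first.
    segments, t = [], T - 1
    while t >= 0:
        segments.append((end_label[t], end_start[t], t))
        t = end_start[t] - 1
    return segments[::-1], float(end_cost[T - 1])


# Usage with toy 1-D "descriptors" (hypothetical data): an "up" gesture followed by "down".
up = np.array([[0.0], [1.0], [2.0]])
down = np.array([[2.0], [1.0], [0.0]])
print(one_pass_segment(np.vstack([up, down]), {"up": up, "down": down}))
```

Storing each cell's segment start frame, instead of a full back-pointer, is enough to recover the boundaries: the segment that ends at the last test frame points to the frame where it began, and the best segment ending just before that frame is the predecessor used by the recursion.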