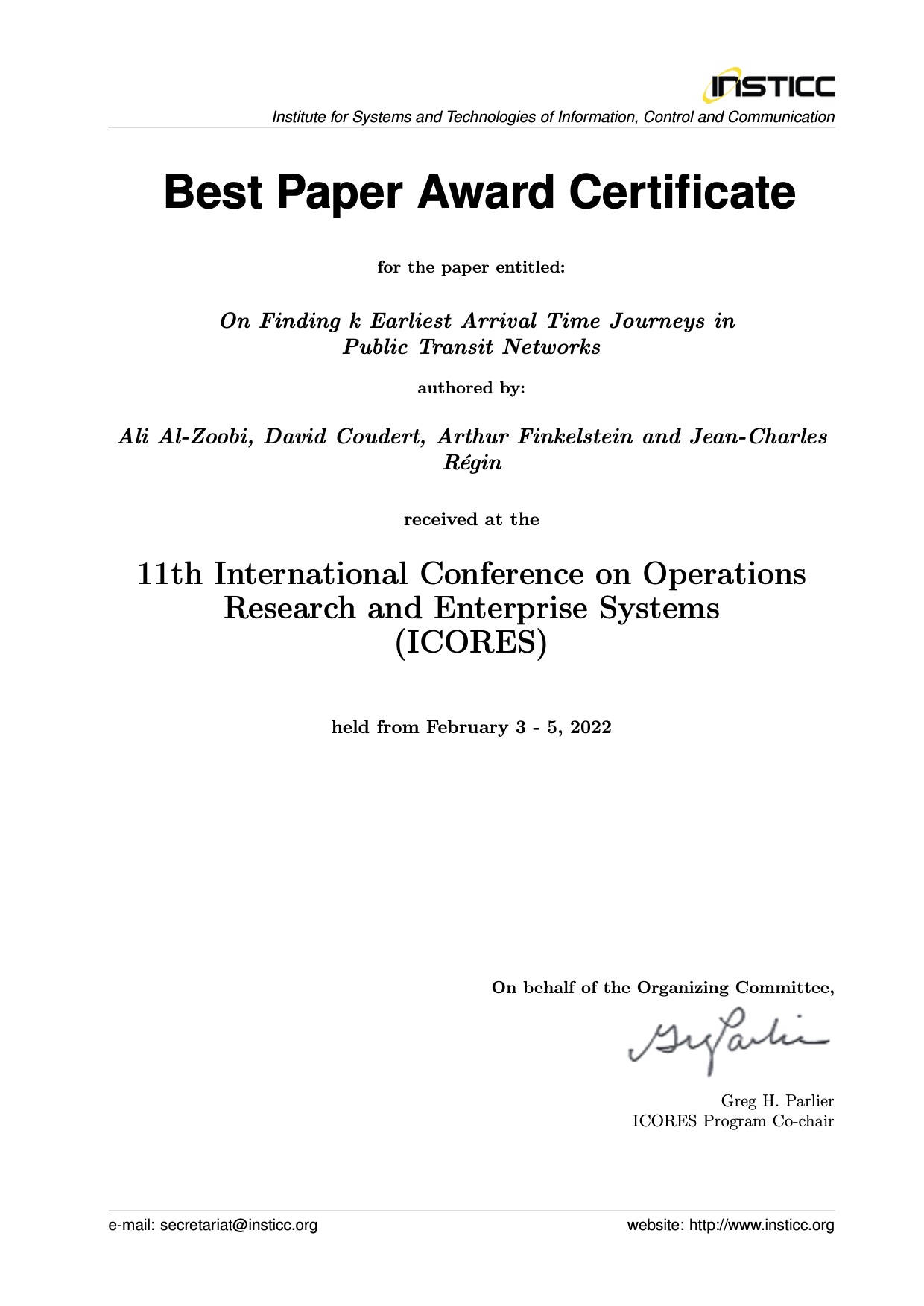

The paper "On Finding k Earliest Arrival Time Journeys in Public Transit Networks" [1] won the Best Paper Award at ICORES 2022.

Congratulations to the authors!

The source code used to run the experiments in this paper is available here.

[1] A. Al-Zoobi, D. Coudert, A. Finkelstein, and J.-C. Régin, "On Finding k Earliest Arrival Time Journeys in Public Transit Networks," in 11th International Conference on Operations Research and Enterprise Systems (ICORES), Virtual event, France, Feb. 2022, pp. 314-325.

[Download PDF](https://hal.inria.fr/hal-03559992/file/ptkssp.pdf)

BibTeX: `@inproceedings{alzoobi:hal-03559992, TITLE = {{On Finding k Earliest Arrival Time Journeys in Public Transit Networks}}, AUTHOR = {Al-Zoobi, Ali and Coudert, David and Finkelstein, Arthur and R{\'e}gin, Jean-Charles}, URL = {https://hal.inria.fr/hal-03559992}, BOOKTITLE = {{11th International Conference on Operations Research and Enterprise Systems (ICORES)}}, ADDRESS = {Virtual event, France}, PAGES = {314-325}, YEAR = {2022}, MONTH = Feb, KEYWORDS = {Public Transit Routing ; shortest path ; dissimilar paths}, PDF = {https://hal.inria.fr/hal-03559992/file/ptkssp.pdf}, HAL_ID = {hal-03559992}, HAL_VERSION = {v1}, }`