Third (Virtual) Workshop of the HPDaSc project

10 December 2020

10h00-13h00 Rio de Janeiro, 14h00-17h00 Montpellier

Workshop objective: discuss new opportunities for collaborative research.

Workshop program

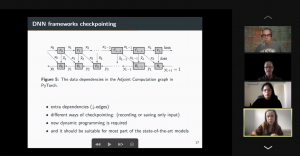

10:00 – 10:30 / 14:00 – 14:30 Title: Optimal Re-Materialization Strategies for Heterogeneous Chains: How to Train Deep Neural Networks with Limited Memory, Alena Shilova, Olivier Beaumont, Lionel Eyraud-Dubois, Julien Herrmann, Alexis Joly

Abstract: We introduce a new activation re-materialization strategy that significantly reduces memory usage when training Deep Neural Networks with the back-propagation algorithm. Similarly to checkpointing techniques from the Automatic Differentiation literature, it consists in dynamically selecting the forward activations that are saved (materialized) or deleted during training, and then automatically recomputing missing activations from those previously materialized. We propose an original computation model that combines two types of activation savings: either storing only the layer inputs, or recording the complete history of operations that produced the outputs. We prove that classical techniques from the Automatic Differentiation literature do not apply in the fully heterogeneous case, since the classical assumption of memory persistence of materialized activations no longer holds. We nevertheless provide an algorithm to compute the optimal computation sequence.
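
To make the save-or-recompute idea concrete, here is a minimal sketch of classical periodic checkpointing on a chain of scalar functions. It is not the optimal algorithm presented in the talk: the function names, the `every` parameter, and the representation of each layer as a `(f, df)` pair are assumptions made for illustration only.

```python
import math

def forward_with_checkpoints(x, layers, every):
    """Run the chain, materializing only every `every`-th layer input.

    `layers` is a list of (f, df) pairs: a scalar function and its derivative.
    """
    checkpoints = {}
    for i, (f, _) in enumerate(layers):
        if i % every == 0:
            checkpoints[i] = x  # keep the input of layer i in memory
        x = f(x)
    return x, checkpoints

def backward_with_recompute(checkpoints, layers, every, grad_out=1.0):
    """Back-propagate through the chain, recomputing inputs that were not kept.

    Naive on purpose: each missing input is recomputed from the nearest
    earlier checkpoint, so extra forward steps are traded for memory.
    Returns the gradient and the number of recomputed forward steps.
    """
    grad = grad_out
    recomputed = 0
    for i in reversed(range(len(layers))):
        start = (i // every) * every      # nearest checkpoint at or before i
        a = checkpoints[start]
        for j in range(start, i):         # re-materialize the input of layer i
            a = layers[j][0](a)
            recomputed += 1
        grad *= layers[i][1](a)           # chain rule with df_i evaluated at a
    return grad, recomputed

# Hypothetical 4-layer chain: y = 3 * exp(sin(x) ** 2)
layers = [
    (math.sin, math.cos),
    (lambda v: v * v, lambda v: 2 * v),
    (math.exp, math.exp),
    (lambda v: 3 * v, lambda v: 3.0),
]
y, kept = forward_with_checkpoints(0.5, layers, every=2)
grad, extra_steps = backward_with_recompute(kept, layers, every=2)
```

With `every=1` all inputs are stored and nothing is recomputed; larger values store fewer activations at the price of more forward steps during the backward pass.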

10:30 – 11:00 / 14:30 – 15:00 Title: Running PINNs on the Grid5000: First Experiences, Romulo Silva, Debora Pina, Liliane Kunstmann, Daniel de Oliveira, Patrick Valduriez, Alvaro Coutinho, Marta Mattoso

Abstract: The growing popularity of Neural Networks in scientific computing presents several challenges in configuring training parameters and validating the models. In mathematical problems, machine learning as a whole has been used to approximate computationally costly problems, to discover equations by coefficient estimation, and to build surrogates. These applications are outside the common usage of neural networks and require a different set of techniques, generally encompassed by Physics-Informed Neural Networks (PINNs), which appear to be a good alternative for solving forward and inverse problems governed by PDEs in a small data regime, especially when it comes to Uncertainty Quantification. PINNs have been successfully applied to problems in fluid dynamics, inference of hydraulic conductivity, velocity inversion, phase separation and many others, but we still need to investigate their computational aspects, especially their scalability when running on large-scale systems. Although there are hyperparameter setting recommendations, fine-tuning is still required. In PINNs, this fine-tuning requires analyzing not only configurations but also how they relate to the loss function evaluation. We propose data provenance capture and analysis techniques to improve the model training of PINNs. We also report our first attempts to run PINNs on Grid5000.

11:00 – 11:30 / 15:00 – 15:30 Title: Inferring phylogenetic networks from ancestral genes to regression trees and their use for trait evolution, Rafael de Souza Terra, Kary Ocaña

Abstract: Biological data provide detailed insights into the evolutionary history of species, traditionally represented as phylogenetic trees. Many evolutionary processes involve the transfer of genetic information, including vertical descent from parent to offspring, but also so-called horizontal transfer, such as occurs via hybridization, recombination, introgression, gene transfer, and genome fusion. Networks are used for comparative analysis of continuous traits to describe various evolutionary events, since all of these processes have presumably been occurring continuously throughout the history of life. The phylogeny of life has therefore been more or less network-like in different parts, depending on the balance between vertical and horizontal descent. Several open-source packages contribute to network estimation, manipulation and plotting, notably PhyloNet, BEAST2, TreeMix, Dendroscope, the Galaxy platform, and PhyloNetworks. There are at least five heuristic uses of affinity networks in phylogenetics that we considered in the modeling process: exploratory data analysis, displaying data patterns, displaying data conflicts, analyzing results, and testing phylogenetic hypotheses. Based on that idea, we have used genes, trees, networks, and the thought processes of phylogeneticists as a metaphor to model a network-based framework integrating Python/R scripting libraries. We illustrate these results by classifying viruses with a classifier that we trained on simulated trees from different phylogeny networks. Next steps involve multiomic-data integration enriched with pathway networks to identify key transcriptional regulators and RNA binding proteins.

11:30 – 12:00 / 15:30 – 16:00 Break (breakout rooms)

12:00 – 12:30 / 16:00 – 16:30 Title: DJEnsemble: On the Selection of a Disjoint Ensemble of Deep Learning Black-Box Spatio-Temporal Models, Yania Souto, Rafael Pereira, Anderson Chaves, Rocio Zorrilla, Brian Tsan, Florin Rusu, Eduardo Ogasawara, Artur Ziviani, Fabio Porto

Abstract: Consider a set of black-box models, each independently trained on a different dataset, answering the same predictive spatio-temporal query. Being built in isolation, each model traverses its own life-cycle until it is deployed to production. As such, these competitive models learn data patterns from different datasets and face independent hyper-parameter tuning. In order to answer the query, the set of black-box predictors has to be ensembled and allocated to the spatio-temporal query region. However, computing an optimal ensemble is a complex task that involves selecting the appropriate models and defining an effective allocation function that maps the models to the query region. In this talk, we present a cost-based approach for the automatic selection and allocation of a disjoint ensemble of black-box predictors to answer predictive spatio-temporal queries.
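
As a rough illustration of what a disjoint allocation means, the sketch below splits the query region into tiles and assigns exactly one model to each tile by picking the lowest estimated cost. This greedy rule is a stand-in, not the DJEnsemble cost model: the tile names, the `cost` table, and both function names are hypothetical.

```python
def allocate_disjoint(tiles, cost):
    """Map each tile of the query region to exactly one black-box model.

    `cost[model][tile]` is an assumed, precomputed error estimate for running
    `model` on `tile`; the model with the lowest estimate wins the tile.
    """
    return {tile: min(cost, key=lambda m: cost[m][tile]) for tile in tiles}

def predict(allocation, models, tile_inputs):
    """Answer the query tile by tile, using only the model allocated to each tile."""
    return {
        tile: models[allocation[tile]](tile_inputs[tile])
        for tile in allocation
    }

# Hypothetical example: two models, two tiles.
cost = {
    "model_a": {"north": 0.2, "south": 0.9},
    "model_b": {"north": 0.5, "south": 0.1},
}
allocation = allocate_disjoint(["north", "south"], cost)
```

Because every tile receives a single model, the resulting ensemble is disjoint by construction; how the cost estimates are obtained is the part this sketch leaves open.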

12:30 – 12:45 / 16:30 – 16:45 – Closing

Participants:

Zenith: Esther Pacitti, Patrick Valduriez, Alexis Joly, Florent Masseglia, Reza Akbarinia, Christophe Pradal, Baldwin Dumontier, Oleksandra Levchenko, Jean-Christophe Lombardo, Ji Liu (Baidu Research). PhD students: Gaetan Heidsieck, Benjamin Deneu, Lamia Djebour, Daniel Rosendo (Kerdata team), Alena Shilova (Cepage team).

LNCC: Fabio Porto, Kary Ocaña, Luiz Gadelha. PhD students: Anderson Chaves, Maria Luiza Modelli. MSc students: Rafael de Souza Terra, Rafael Pereira.

COPPE/UFRJ: Marta Mattoso, Alvaro Coutinho. PhD students: Debora Pina, Liliane Kunstmann Neves, Gabriel Barros, Romulo Silva, Renan Souza (postdoc).

UFF: Daniel de Oliveira, Aline Paes, Yuri Frota. PhD students: Marcello Willians Messina, Carlos Gracioli, Raama Costa, Luiz Gustavo Dias, Maria Luiza Falci.

CEFET-RJ: Eduardo Ogasawara, Rafaelli Coutinho. PhD students: Heraldo Borges, Rebecca Salles, Lais Baroni. MSc student: Antonio Castro Jr.