The next Mokameeting will take place on Wednesday, January 20, 2021, at 2:00 p.m. on Discord.

We will have the pleasure of hearing two talks, one by Flavien Léger (ENS Paris), the other by Mathurin Massias (University of Genoa).

Talk by Flavien Léger

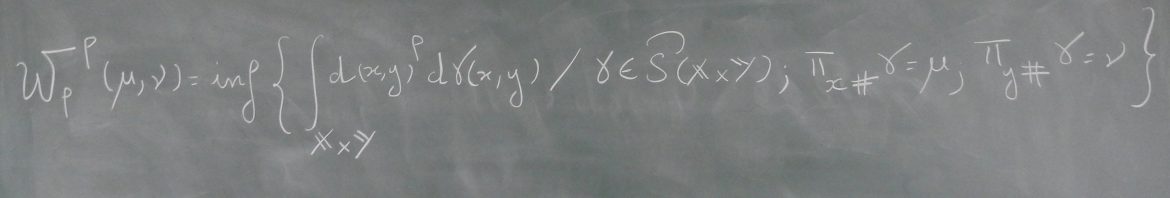

Title: The back-and-forth method for Wasserstein gradient flows

Abstract: We present a method to efficiently compute Wasserstein gradient flows. Our approach is based on a generalization of the back-and-forth method (BFM) introduced in [Jacobs & Léger, 2020] to solve optimal transport problems. We evolve the gradient flow by solving the dual problem to the JKO scheme. In general, the dual problem is much better behaved than the primal problem. This allows us to efficiently run large-scale gradient flow simulations for a large class of internal energies, including singular and non-convex energies.
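For readers unfamiliar with the JKO scheme mentioned in the abstract, its standard form can be written as below. The notation is an assumption made here for illustration and is not taken from the talk: $E$ denotes the energy functional, $\tau > 0$ the time step, $\rho_k$ the density at step $k$, and $W_2$ the 2-Wasserstein distance.

$$
\rho_{k+1} \in \operatorname*{arg\,min}_{\rho} \; \left\{ E(\rho) + \frac{1}{2\tau} W_2^2(\rho, \rho_k) \right\}
$$

Each step is thus an implicit (proximal) update of the gradient flow of $E$ in Wasserstein space; the abstract's method works with the dual of this minimization problem.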

Talk by Mathurin Massias

Title: Fast resolution of structured inverse problems: extrapolation and iterative regularization

Abstract: Overparametrization is common in linear inverse problems, which raises the question of stability and uniqueness of the solution. A remedy is to select a specific solution by minimizing a bias functional over all interpolating solutions. This functional is frequently neither smooth nor strongly convex (L1, L2/L1, nuclear norm, TV). In the first part of the talk, we study fast solvers for the so-called Tikhonov approach, where the bilevel optimization problem is relaxed into “datafit + regularization” (e.g., the Lasso). We show that, for this type of problem arising in ML, coordinate descent algorithms can be accelerated by Anderson extrapolation, which surpasses full gradient methods and inertial acceleration.

The Tikhonov approach can be costly, as it requires calibrating the regularization strength over a grid of values, and thus solving many optimization problems. In the second part of the talk, we present results on iterative regularization: a single optimization problem is solved, and the number of iterations acts as the regularizer. We derive novel results on the early-stopped iterates, in the case where the bias is convex but not strongly convex.
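As a rough illustration of iterative regularization, the sketch below runs plain gradient descent on an overparametrized least-squares problem, starting from zero. This is the simplest textbook case, where the implicit bias is the squared L2 norm; it is not the convex but not strongly convex setting treated in the talk. The function name `gradient_descent_path`, the problem sizes, and the random data are all assumptions chosen for the example.

```python
import numpy as np


def gradient_descent_path(A, b, n_iter):
    """Gradient descent on 0.5 * ||A x - b||^2 from x = 0; returns every iterate."""
    # Step size 1 / L, where L = ||A||_2^2 is the Lipschitz constant of the gradient.
    step = 1.0 / np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    path = [x.copy()]
    for _ in range(n_iter):
        x = x - step * A.T @ (A @ x - b)
        path.append(x.copy())
    return np.array(path)


# Hypothetical overparametrized problem: 20 observations, 50 unknowns.
rng = np.random.default_rng(0)
A = rng.standard_normal((20, 50))
b = A @ rng.standard_normal(50)

path = gradient_descent_path(A, b, n_iter=5000)

# Among all interpolating solutions, the one with minimal L2 norm.
x_min_norm = np.linalg.pinv(A) @ b

# The norm of the iterates grows with the iteration count.
norms = np.linalg.norm(path, axis=1)
```

In this toy setting the iterates converge to the minimal-norm interpolating solution, and their norm increases along the path, so stopping after fewer iterations yields a more strongly regularized estimate without any grid over a regularization parameter.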

The presentation is based on:

– https://arxiv.org/abs/2011.

– https://arxiv.org/abs/2006.

(joint works with Alexandre Gramfort, Joseph Salmon, Samuel Vaiter, Quentin Bertrand, Cesare Molinari, Lorenzo Rosasco and Silvia Villa)