Master Class M2R: Parallel Systems 2017-2018

General Information

Where and When: The lectures take place every Monday from 9:45 to 12:45 in room H203 or H204. The schedule is also available online here (look for Ensimag, Semester 9, Parallel System). To find the building: campus map.

Credits & Exam: The course gives 6 credits (ECTS). There will be an exam at the end of the year.

Objectives

Today, parallel computing is omnipresent across a large spectrum of computing platforms. At the “microscopic” level, processor cores have used multiple functional units in concurrent and pipelined fashions for years, and multiple-core chips are now commonplace with a trend toward rapidly increasing numbers of cores per chip. At the “macroscopic” level, one can now build clusters of hundreds to thousands of individual (multi-core) computers. Such distributed-memory systems have become mainstream and affordable in the form of commodity clusters. Furthermore, advances in network technology and infrastructures have made it possible to aggregate parallel computing platforms across wide-area networks in so-called “grids.” The popularization of virtualization has made it possible to consolidate workloads and resource usage in “clouds,” which raises many energy and efficiency issues.

Exploiting such platforms efficiently requires a deep understanding of architecture, software, and infrastructure mechanisms as well as of advanced algorithmic principles. The aim of this course is thus twofold. First, it introduces the main trends and principles in the area of high performance computing infrastructures, illustrated by examples of the current state of the art. Second, it provides a rigorous yet accessible treatment of parallel algorithms, including theoretical models of parallel computation, parallel algorithm design for homogeneous and heterogeneous platforms, complexity and performance analysis, and fundamental notions of scheduling and work-stealing. These notions will always be presented in connection with real applications and platforms.

Homework and Programming Project

You first have to study the course material. The entry point is the set of slides we use in class, which are made available on this web page every week. You are also expected to go beyond these slides by looking for extra material on your own.

You will also have to undertake a parallel programming and performance analysis project (one per student). You choose the topic, which must be validated by us: just speak to us in class or send us an email with a short description of what you intend to do. If you have no idea, we can propose a few topics; just speak to us. You will have to hand in a short report about your work and present it during the last lectures.

This work will be graded A, B, C, or D (from best to worst). This mark will be used as a bonus to improve (or not) your grade at the final exam.

Project List & Schedule

You will have to make a short presentation of your work: 15 minutes + 5 minutes of questions. I will be strict on time, so rehearse to make sure you focus on the important points. You will also have to send me a short report about your work (it can be based on your presentation plus a couple of additional pages to explain missing details):

- 11/12: Konstantinos Dogea: Heterogeneous Task Scheduling

- 11/12: Valentin Bartier: Parallel min-max algorithm for the Bin Stretching problem.

- 11/12: Alexis Janon & Benjamin Rousillon: Bcast & AllReduce

- 18/12: Konstantinos Chalas: 3SUM problem

- 18/12: Christopher Ferreira: Influence of thread mapping with Parsec

- 18/12: Frederic Viry: Reachability analysis of continuous system

- 8/01: Enrique Colao: Hyper Quicksort on MPI

- 8/01: Jimmy Rogala: Dijkstra's algorithm (MPI)

- 8/01: Antonio Barreto & Sarah Luis: Parallel Quad-tree for image compression

- 8/01: Othman Touijer: Collective communication based on the radix-k algorithm

- 15/01: Khrystyna Fedyuk & Tiago Madeira: MergePath Algorithm

- 15/01: Malalatiana RANDRIANARIJAONA: Cholesky decomposition

- 15/01: Anthony Papasergio: Graph-X

- 15/01: Raphael Jacquet & Romain NAVARRO: Parallel Huffman coding

- 15/01: Valoroso: Parallel MinMax with MPI

Suggestions for Projects

- The HPX programming language (study the programming paradigm and benchmark a few example codes): http://stellar.cct.lsu.edu/projects/hpx/

- The UPC programming language (study the programming paradigm and benchmark a few example codes): http://upc.lbl.gov

- MergePath Algorithm: a parallel merge algorithm

- Radix-k image compositing: try to implement this algorithm not for image compositing but as a reduce-scatter collective communication: http://www.mcs.anl.gov/papers/P1624.pdf

- Graph-X: a library on top of Spark for parallel graph processing. Use it to implement some graph algorithms and benchmark it.

- Cilk THE protocol: try to implement a basic version of the work-stealing scheduler
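To give a feel for the MergePath suggestion above, here is a minimal sequential sketch of the idea, under stated assumptions: the merge of two sorted lists is cut into `parts` pieces of nearly equal size by a binary search along cross-diagonals, and each piece can then be merged independently of the others. The function names `diagonal_split` and `merge_path` are illustrative, not taken from any library, and a real project would run the per-piece merges in parallel (threads, MPI ranks, etc.) rather than in a loop.

```python
import heapq


def diagonal_split(a, b, d):
    """Return i such that a[:i] and b[:d - i] together hold the first d
    elements of the merge of the sorted lists a and b (ties taken from a)."""
    lo = max(0, d - len(b))
    hi = min(d, len(a))
    while lo < hi:
        i = (lo + hi) // 2
        j = d - i
        # If a[i] must come before b[j - 1], the split takes too few items from a.
        if a[i] <= b[j - 1]:
            lo = i + 1
        else:
            hi = i
    return lo


def merge_path(a, b, parts):
    """Merge sorted lists a and b by cutting the work into `parts` independent
    pieces (parts >= 1). The pieces are processed in a plain loop here; each
    iteration touches disjoint slices, so they could run in parallel."""
    n = len(a) + len(b)
    diagonals = [k * n // parts for k in range(parts + 1)]
    cuts = [diagonal_split(a, b, d) for d in diagonals]
    out = []
    for k in range(parts):
        i0, i1 = cuts[k], cuts[k + 1]
        j0, j1 = diagonals[k] - i0, diagonals[k + 1] - i1
        # Sequential merge of one balanced piece.
        out.extend(heapq.merge(a[i0:i1], b[j0:j1]))
    return out
```

Each piece contains either `n // parts` or one more element, which is what makes the decomposition load-balanced regardless of how the values of the two inputs interleave.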

Parallel Programming

A non-exhaustive list of programming environments you can use for your project:

- Open MPI

- UPC

- Intel Cilk Plus

- Intel TBB

- OpenMP

- Some Big Data and deep learning tools: Spark, Flink, TensorFlow, PyTorch

Where to Run Your Parallel Programs

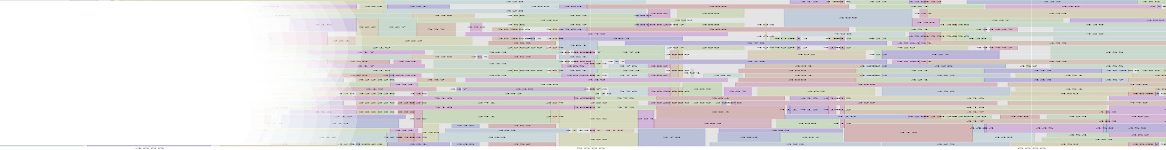

Schedule and Class Materials

- 2/10: Introduction to Parallelism (Arnaud Legrand)

- 9/10: Distributed Memory Programming: the message passing model with MPI (Bruno Raffin)

- 16/10: Distributed Memory Programming: one-sided communications (Bruno Raffin)

- 23/10: Parallel Algorithms (Arnaud Legrand)

- 30/10: No class (Fall break)

- 6/11: Shared Memory Programming (Bruno Raffin)

- 13/11: Parallel Algorithms (Arnaud Legrand)

- 20/11: No class (rescheduled to 15/01)

- 27/11: Shared Memory Programming (Bruno Raffin)

- 4/12: Scheduling (Arnaud Legrand)

- 11/12: OpenMP (Bruno Raffin)

- 18/12: Big Data Processing (Bruno Raffin):

  - Slides from class

  - Google Map/Reduce Paper: MapReduce: Simplified Data Processing on Large Clusters

- 8/01: Auto-tuning (Arnaud Legrand)

- 15/01: Parallel Learning (Bruno Raffin)

- Exam between 24/01 and 31/01

Past Exams

Exams from previous years are available here

Internships

All internships are on Pcarre: http://im2ag-pcarre.e.ujf-grenoble.fr/ (search by the supervisor's name to find related internships)

1 Teams working on PDS research topics:

- DATAMOVE: data aware large scale computing https://team.inria.fr/datamove

- CORSE: Compiler Optimizations and Runtime Systems https://team.inria.fr/corse/

- DRAKKAR: Networking and Multimedia https://drakkar.imag.fr

- ERODS: Efficient and RObust Distributed Systems http://erods.liglab.fr

- POLARIS: Performance evaluation and Optimization of LARge Infrastructures and Systems https://team.inria.fr/polaris/

- Plus other teams (e.g., VERIMAG for verification/embedded systems, TIMA for architecture)

2 Internships

- Internship at Bull: matthieu.perotin@atos.net. Application Specific Fabric Routing (soon on pcarre, or contact him directly for more details)

- Internship at Polaris/Argonne National lab: Grenoble February-June + Argonne National Lab (Chicago) July-August: Guillaume.Huard@imag.fr and swann@anl.gov (Huard on pcarre)

- Internships at Polaris: https://team.inria.fr/polaris/job-offers/

- Internships at DataMove:

  - Bruno.Raffin@inria.fr: https://team.inria.fr/datamove/job-offers/ (or raffin on pcarre)

  - Denis.Trystram@inria.fr (in collaboration with two different companies) (trystram on pcarre):

    - Data Management on hybrid Big Data infrastructures: using blockchain technology to compute everywhere.

    - How fast can you go? Reliable stream processing on a Raspberry Pi cluster

    - Parallel 3D rendering with performance guarantees in an HPC cloud

    - Automatic detection of meetings in smart buildings

    - Multi-objective resource allocation in hybrid Big Data infrastructures

    - Towards Data Flow capabilities on a resource and compute management system for Big Data analytics on hybrid infrastructures

    - Data Privacy management on Hybrid Big Data infrastructures

- Internship VASCO: Roland Groz (GROZ on pcarre)

  - A model-based approach for vulnerability detection on industrial control systems

  - Analysis of traces of computer-assisted surgery

  - Learning behaviours of systems

  - Reverse-engineering from binary analysis and grey box inference

- Internship VERIMAG/PACSS: David Monniaux (monniaux on pcarre)