This page presents the current and past projects in which the team is or was involved.

2020-2024 NEPTUNE

The NEPTUNE (Natation Et Paranatation, Tous Unis pour Nos Elites) project is part of the French National Research Agency program PIA – Sport de Très Haute Performance, which aims at optimizing performance for the 2024 Olympic and Paralympic Games (OPG). The objective of the NEPTUNE project is to provide French coaches and swimmers with quantitative tools and methods in order (1) to evaluate the key performance factors (stroke rate/stroke length ratio, velocity, water resistance, force, gliding efficiency, motor coordination, etc.), (2) to identify the different performance profiles in order to individualize training, and (3) to build physical models in order to optimize performance. Supported by the French Swimming Federation (FFN) and the French Handisport Federation (FFH), a consortium of 6 grandes écoles (ENPC, ENS Rennes, Centrale Lyon, INSA Rouen, INSA Lyon and Ecole Polytechnique), 5 universities (University of Rouen, Rennes, Picardie, Lille and Paris XIII) and the National Institute of Sport, Expertise and Performance (INSEP) emerged thanks to the Sciences2024 program.

2020-2024 H2020 FET OPEN CrowdDNA

CrowdDNA aims to enable a new generation of “crowd technologies”, i.e., systems that can prevent deaths, minimize discomfort and maximize efficiency in the management of crowds. It analyses crowd behaviour to estimate the characteristics that are essential to understand the current state of a crowd and predict its evolution. CrowdDNA is particularly concerned with the dangers and discomforts associated with very high-density crowds, such as those that occur at cultural or sporting events or in public transport systems. The main idea behind CrowdDNA is that the analysis of a new kind of macroscopic feature of a crowd – such as the apparent motion field, which can be efficiently measured in real mass events – can reveal valuable information about its internal structure, provide a precise estimate of the crowd state at the microscopic level and, more importantly, predict its potential to generate dangerous crowd movements. This way of understanding low-level states from high-level observations is similar to how humans can tell a lot about the physical properties of a given object just by looking at it, without touching it. CrowdDNA challenges the existing paradigms, which rely on simulation technologies to analyse and predict crowds and also require complex estimations of many features, such as density, counts or individual features, to calibrate simulations. This vision raises one main scientific challenge, which can be summarized as the need for a deep understanding of the numerical relations between the local – microscopic – scale of crowd behaviours (e.g., contacts and pushes at the limb scale) and the global – macroscopic – scale, i.e. the entire crowd.

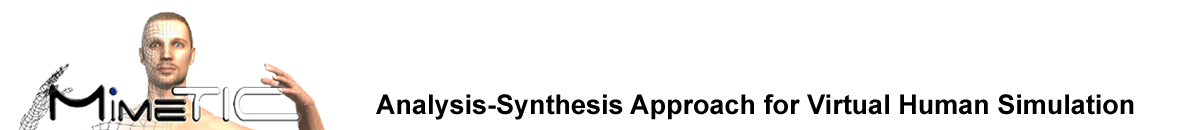

2020-2024 ANR CapaCities

Biomechanical cost quantification for wheelchair accessible cities

This project is led by Christophe Sauret, from INI/CERAH. The project objective is to build a series of biomechanical indices characterizing the biomechanical difficulty of a wide range of urban environmental situations. These indices will rely on different biomechanical parameters such as proximity to joint limits, forces applied on the handrims, mechanical work, muscle and articular stresses, etc. The definition of a more comprehensive index, called the Comprehensive BioMechanical (CBM) cost, which includes several of the previous indices, will also be a challenging objective. The results of this project will then be used, in the first place, in the VALMOBILE application to assist manual wheelchair (MWC) users in selecting an optimal route in the Valenciennes agglomeration (project funded by the French National Agency for Urban Renewal and the North Department of France). The MimeTIC team is involved in the musculoskeletal simulation issues and in the definition of the biomechanical costs.

2020-2023 H2020 ICT ITN CLIPE (website)

Creating Lively Interactive Populated Environments

CLIPE is an Innovative Training Network (ITN) funded by the Marie Skłodowska-Curie program of the European Commission. The primary objective of CLIPE is to train a generation of innovators and researchers in the field of virtual characters simulation and animation. Advances in technology are pushing towards making VR/AR worlds a daily experience. Whilst virtual characters are an important component of these worlds, bringing them to life and giving them interaction and communication abilities requires highly specialized programming combined with artistic skills, and considerable investments: millions spent on countless coders and designers to develop video-games is a typical example. The research objective of CLIPE is to design the next-generation of VR-ready characters. CLIPE is addressing the most important current aspects of the problem, making the characters capable of: behaving more naturally; interacting with real users sharing a virtual experience with them; being more intuitively and extensively controllable for virtual worlds designers. To meet our objectives, the CLIPE consortium gathers some of the main European actors in the field of VR/AR, computer graphics, computer animation, psychology and perception. CLIPE also extends its partnership to key industrial actors of populated virtual worlds, giving students the ability to explore new application fields and start collaborations beyond academia.

2020-2022 SAD Brittany Region – Reactive

The general objective is to create interactive characters endowed with levels of behavioural sensitivity and responsiveness for Virtual Reality applications. By populating virtual environments with such characters, our goal is to achieve new levels of immersive experiences, by reinforcing the feeling of presence through non-verbal communication between a user and one or more agents. A prerequisite for the creation of reactive and expressive virtual humans is to understand how “real” humans interact and react to the behaviours and reactions of others. This is the core of the REACTIVE project. It focuses on the elements of non-verbal communication resulting from body movements.

2020-2022 DSR – AUTOMA-PIED 2

AUTOMA-PIED 2 is driven by IFSTTAR. The main objective is to study the role of repeated practice in the development of pedestrians’ knowledge of automated vehicles, their confidence, and the evolution of their street crossing behaviours with experience. The question of the design of the automated vehicle is also addressed. Inter-age comparisons will allow us to investigate the strong road safety issue that concerns older people, their greater occurrence of pedestrian accidents and their specific needs. More generally, this project will allow us to deepen our knowledge of automated pedestrian-vehicle interactions and to feed the discussion and implementation of preventive measures regarding road safety policy and automated vehicle design.

2019-2024 ANR JCJC – 3DMOVE

3DMOVE: Learning to synthesize 3D dynamic human motion is led by Stefanie Wuhrer (Inria Grenoble). The main objective of 3DMOVE is to automatically compute high-quality generative models from a database of raw dense 3D motion sequences for human bodies and faces.

2019-2022 H2020 ICT-25 RIA PRESENT (website)

This European project aims at creating virtual characters that are realistic in looks and behaviour, and who can act as trustworthy guardians and guides in the interfaces for AR, VR and more traditional forms of media. It is conducted in collaboration with industrial partners The Framestore Ltd, Cubic Motion Ltd, InfoCert Spa, Brainstorm Multimedia S.L., Creative Workers – Creatieve Werkers VZW, and academic partners Universidad Pompeu Fabra and Universität Augsburg.

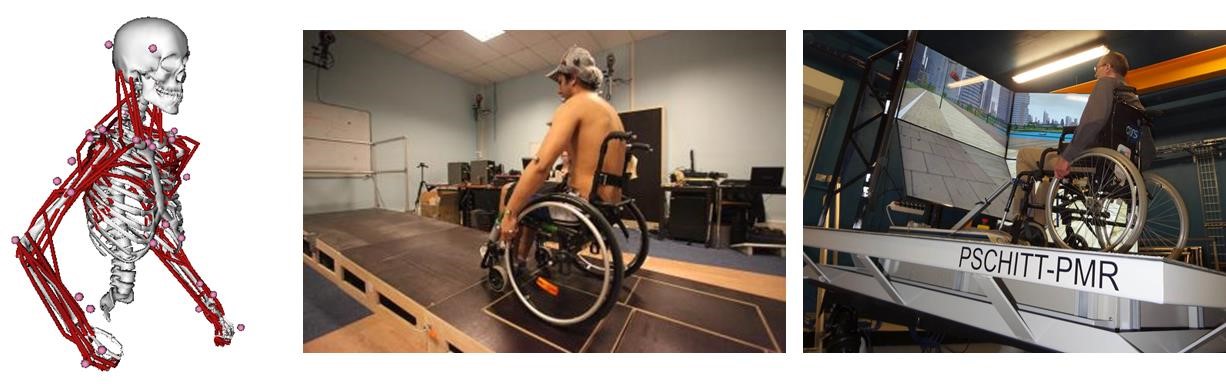

2019-2022 ANR HoBiS

Exploration of bipedal gaits in Hominins thanks to Specimen-Specific Functional Morphology

The ANR project HoBiS (Hominin Bipedalism, led by Museum National d’Histoire Naturelle) is a pluridisciplinary research project (LAAS/CNRS, Univ. Rennes2), fundamental in nature and centred on palaeoanthropological questions related to habitual bipedalism, one of the most striking features of the human lineage. Recent discoveries (up to 7 My) highlight an unexpected diversity of locomotor anatomies in Hominins, which leads palaeoanthropologists to hypothesize that habitual bipedal locomotion took distinct shapes through our phylogenetic history. We will develop a workflow and software package to generate plausible anatomical and musculoskeletal models given the available fossil specimen data and what can be hypothesized (e.g. mass distribution, muscle properties) based on other specimens and other species, including living models. For the first time, this will make it possible to explore the parameter space spanned by our knowledge about fossils and extant animals, enabling palaeoanthropologists to propose hypotheses to reconstruct the whole model and address its anatomical variation.

2019-2021 Inria Associate Team – BEAR

BEAR is a collaborative project between France (Inria Rennes) and Canada (Wilfrid Laurier University and Waterloo University), dedicated to the simulation of human behavior during interactions between pedestrians. In this context, the project aims at providing more realistic models and simulations by considering the strong coupling between pedestrians’ visual perception and their locomotor adaptations.

2018-2023 Inria Research Challenge "Avatar" (website)

The next generation of virtual selves in digital worlds

This project, led by Ludovic Hoyet, aims at designing avatars (i.e., the user’s representation in virtual environments) that are better embodied, more interactive and more social, by improving the whole avatar pipeline, from acquisition and simulation to the design of novel interaction paradigms and multi-sensory feedback. It involves 6 Inria teams (GraphDeco, Hybrid, Loki, MimeTIC, Morpheo, Potioc), Prof. Mel Slater (Uni. Barcelona), and 2 industrial partners (Technicolor and Faurecia).

2018-2022 French ANR JCJC Per² (website)

Perception-based Human Motion Personalisation

Per² is a 42-month ANR JCJC project (2018-2022) entitled Perception-based Human Motion Personalisation, led by Ludovic Hoyet. The objective is to focus on how viewers perceive motion variations in order to automatically produce natural motion personalisation that accounts for inter-individual variations. In short, our goal is to automate the creation of motion variations to represent given individuals according to their own characteristics, and to produce natural variations that are perceived and identified as such by users. The challenges addressed in this project consist in (i) understanding and quantifying what makes the motions of individuals perceptually different, (ii) synthesising motion variations based on these identified relevant perceptual features, according to given individual characteristics, and (iii) leveraging the synthesis of motion variations even further and exploring their creation for interactive large-scale scenarios where both performance and realism are critical.

2018-2021 H2020 JPICH Digital Heritage "SCHEDAR"

Safeguarding the Cultural HEritage of Dance through Augmented Reality

Dance is an integral part of any culture. Recent computing advances have enabled the accurate 3D digitization of human motion. Such systems provide a new means for capturing, preserving and subsequently re-creating intangible cultural heritage (ICH), which goes far beyond traditional written or imaging approaches. However, 3D motion data is expensive to create and maintain, the semantic information it encompasses is difficult to extract and formulate, and current software tools to search and visualize this data are too complex for most end-users. SCHEDAR (led by Univ. of Cyprus) will provide novel solutions to the three key challenges of archiving, re-using and re-purposing, and ultimately disseminating ICH motion data. Partners are Algolysis Ltd, Univ. of Warwick, Univ. Reims Champagne-Ardennes.

2018-2020 DSR – AUTOMA-PIED

AUTOMA-PIED (Quelles interactions véhicules AUTOMAtisés – PIEtons pour Demain) is driven by IFSTTAR. Using a virtual reality set-up, the first objective of the project is to compare pedestrian behaviour (young and older adults) when interacting with traditional or autonomous vehicles in a street crossing scenario. The second objective is to identify postural cues that can predict whether or not the pedestrian is about to cross the street.

2017-2020 French/German OPMoPS (website)

OPMoPS (Organized Pedestrian Movement in Public Spaces) is a three-year Research and Innovation project, where partners representing civil security forces (FCS) will cooperate with researchers from academic institutions to develop a decision support tool that can help them in both the preparation and crisis management phases of urban parades and demonstrations (UPMs). The development of this tool will be conditioned by the needs of the FCS, but also by the results of research into the social behaviour of participants and opponents. The latter, as well as the evaluation of legal and ethical issues related to the proposed technical solutions, constitute an important part of the proposed research. Highly controversial group parades or political demonstrations are seen as a major threat to urban security, since the opposed views of participants and opponents can lead to violence or even terrorist attacks. Because UPMs move through a large part of cities, it is particularly difficult for the FCS to guarantee security in these types of urban events without endangering one of the most important indicators of a free society. The specific technical problems to be faced by the Franco-German consortium are: optimisation methods for planning the routes of UPMs, transport to and from the UPM, planning of FCS people and their location, control of the UPMs using fixed and mobile cameras, as well as simulation methods, including their visualisation, with special emphasis on social behaviour. The methods will be applicable to the preparation and organisation of UPMs, as well as to the management of critical situations of UPMs or to deal with unexpected incidents.

2016-2017 SAD Brittany Region – Interact

The project looked at how pedestrian interactions are combined. By proposing different approaches based on real and virtual reality experiments, the project aimed at identifying whether the interactions between 1 vs. 2 walkers were processed simultaneously or sequentially.

2014-2017 ANR PERCOLATION

PERception-based CrOwd simulaTION

PERCOLATION is a young researcher project funded by the French Research Agency (ANR JCJC), led by Julien Pettré. The purpose of PERCOLATION is to develop next-generation crowd simulators by mathematically formulating microscopic simulation models based on perceptual variables (especially visual variables). Agents are equipped with synthetic vision, and their reaction is simulated considering the pixels they perceive. The goal is to model a perceptual control law as close as possible to the real human perception-action loops that drive local interactions.

2013-2017 ANR ENTRACTE (website)

Anthropomorphic Action Planning and Understanding

The ANR project ENTRACTE is a collaboration between the Gepetto team in LAAS, Toulouse (head of the project) and the Inria/MimeTIC team. The purpose of the ENTRACTE project is to address the action planning problem, crucial for robots as well as for virtual human avatars, by analyzing human motion at a biomechanical level and by deriving from this analysis bio-inspired motor control laws and bio-inspired paradigms for action planning.

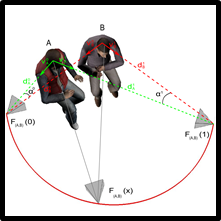

2013-2016 Collaboration with Faurecia

Low-cost motion capture system for ergonomic evaluation on site

This Cifre contract with the Faurecia group aims at developing new ergonomic assessments of real workers in manufacturing plants, based on inaccurate Kinect measurements. In the first part of this project we developed a framework to evaluate the accuracy of the Kinect sensor when used in ergonomic assessment (coll. with Ecole Polytechnique of Montreal). We then proposed an example-based framework to improve the quality of the Kinect data for ergonomics.

2013- Collaboration with Université de Montréal and Imperial College London

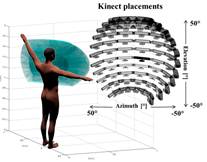

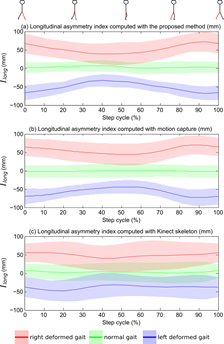

Clinical gait analysis based on Kinect measurements

The aim of this project is to design innovative approaches to monitor patients in clinics, with two main illustrations: the detection of gait events such as footstrikes, and the design of a new asymmetry index, both based on Kinect data. This project is a collaboration with Université de Montréal and Imperial College London.

2012-2015 ANR CINECITTA

Interactive virtual cinematography

Cinecitta is a 3-year young researcher project funded by the French Research Agency (ANR JCJC), led by Marc Christie. The main objective of Cinecitta is to propose and evaluate a novel workflow which mixes user interaction using motion-tracked cameras and automated computation aspects for interactive virtual cinematography that will better support user creativity. We propose a novel cinematographic workflow that features a dynamic collaboration of a creative human filmmaker with an automated virtual camera planner. We expect the process to enhance the filmmaker’s creative potential by enabling very rapid exploration of a wide range of viewpoint suggestions.

2012-2015 ANR CHROME

Crowd patches: populating huge interactive environments

The ANR project CHROME is a collaboration between the Inria Grenoble IMAGINE team, Golaem SAS, Archivideo and the Inria/MimeTIC team (head of the project). The purpose of CHROME is to develop new and original techniques to massively populate huge environments. The key idea is to base our approach on the crowd patch paradigm, which enables populating environments from sets of pre-computed portions of crowd animation. The question of visual exploration of these complex scenes is also raised in the project.

2012-2015 INSEP Virtual Tennis

Perception and action in tennis: pluridisciplinary approach to investigate return of serve

The INSEP project “Perception et action au tennis : une approche pluridisciplinaire centrée sur le retour de service” is a collaboration between the French Tennis Federation, the University of Caen Basse Normandie, the University of Paris Sud 11, the University of Rouen and the University of Rennes2. The purpose of this project is to study the perceptive and motor processes involved in the performance of the return of serve. A multidisciplinary approach is used to analyse anticipation, reaction, displacement and visuomotor coordination skills in real situations, in a laboratory environment and in virtual reality.

2012-2015 ANR REPLICA

Rehabilitation program for facial praxia in children with cerebral palsy using an interactive avatar

The ANR TecSan project REPLICA is a collaboration between Dynamixyz, Supelec, Hôpitaux de Saint-Maurice, CRPCC and the M2S/MimeTIC team (head of the project). The purpose of REPLICA is to build and test a new rehabilitation program for facial praxia in children with cerebral palsy using an interactive device. The principle is that, during a rehabilitation session, the child observes two avatars simultaneously on the same screen: a virtual therapist performing the gesture to be done, and a second avatar animated from the motion the child actually performs.