IMAGINE was a joint team between Laboratoire Jean Kuntzmann and Inria from January 2011 to June 2020. Since July 2020, IMAGINE has been replaced by ANIMA.

With the rapid increase in computational power and memory capacity, increasingly complex and detailed 3D content is expected for virtual environments. Unfortunately, 3D modeling methodologies have not evolved as fast: capturing real objects or motion restricts the range of possible content, while using standard tools to design each 3D shape, animate it, and manually control camera trajectories is time-consuming and leaves the quality of the results entirely in the user’s hands. Lastly, procedural generation methods, when applicable, save the user time but often come at the price of control. We aim to address the challenges raised by the efficient, interactive creation of animated 3D content. To this end, our goal is to develop a new generation of knowledge-based models for shapes, motion, stories and virtual cinematography. These models include both procedural methods, which make the generation of high-quality content more efficient, and intuitive control handles, which let users easily convey their intent and progressively refine the result.

These models are used within different environments for interactive content creation, dedicated to specific applications. More precisely, three main research topics are addressed:

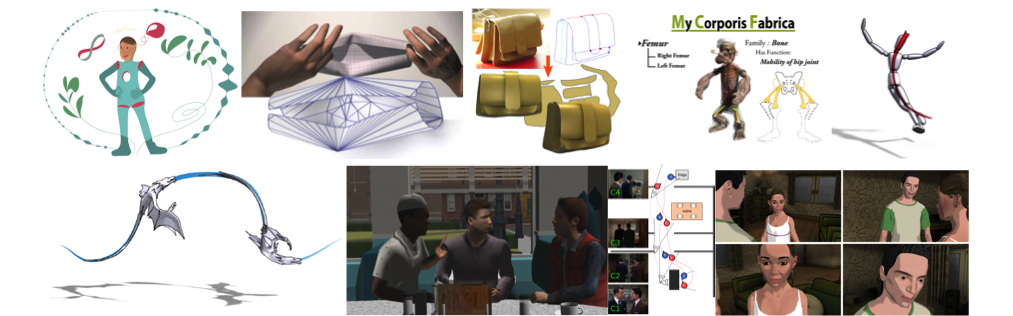

- Shape design: We aim to develop intuitive tools for designing and editing 3D shapes, from arbitrary ones to shapes that obey application-dependent constraints – such as, for instance, being developable for surfaces aimed at representing objects made of cloth or of paper.

- Motion synthesis: Our goal is to ease the interactive generation and control of 3D motion and deformations, in particular by enabling intuitive, coarse-to-fine design of animations. Applications range from the simulation of passive objects to the control of virtual creatures.

- Narrative design: The aim is to help users express, refine and convey temporal narrations, from stories to educational or industrial scenarios. We develop both virtual direction tools, such as interactive storyboarding frameworks, and high-level models for virtual cinematography, such as rule-based cameras able to automatically follow the ongoing action.

An important feature of our project is to enable the authoring and editing of virtual content at every stage of the creation process, regardless of the nature of this content (shapes, motions or stories).

In addition to addressing the specific needs of digital artists, this research should, in the long term, enable professionals and scientists to represent and interact with models of their objects of study, and educators to quickly express and convey their ideas. We would also like to advance towards the development of a new expressive medium, enabling the general public to directly create animated 3D content.

Documents & Presentations

- Activity reports (RAweb): 2017, 2016, 2015, 2014, 2013, 2012, 2011

- Team presentation (2018): part1, part2

- Team presentation (2012): slides

IMAGINE is a team of the Laboratoire Jean Kuntzmann research lab (UMR 5224, between CNRS, Grenoble INP, Inria, UJF, UPMF) and a project of Inria.