Team STORM

Team STORM is a joint project-team between Inria, the CNRS, the University of Bordeaux and Bordeaux INP. It is hosted at the Inria centre at the University of Bordeaux in Talence, France, and is a member of the LaBRI laboratory.

As emphasized by initiatives such as the European Exascale Software Initiative (EESI), the European Technology Platform for High Performance Computing (ETP4HPC) and the International Exascale Software Project (IESP), the HPC community needs new programming APIs and languages for expressing heterogeneous, massive parallelism. These should abstract away the system architecture while still delivering high performance and efficiency. The same conclusion holds for mobile and embedded applications that require performance on heterogeneous systems.

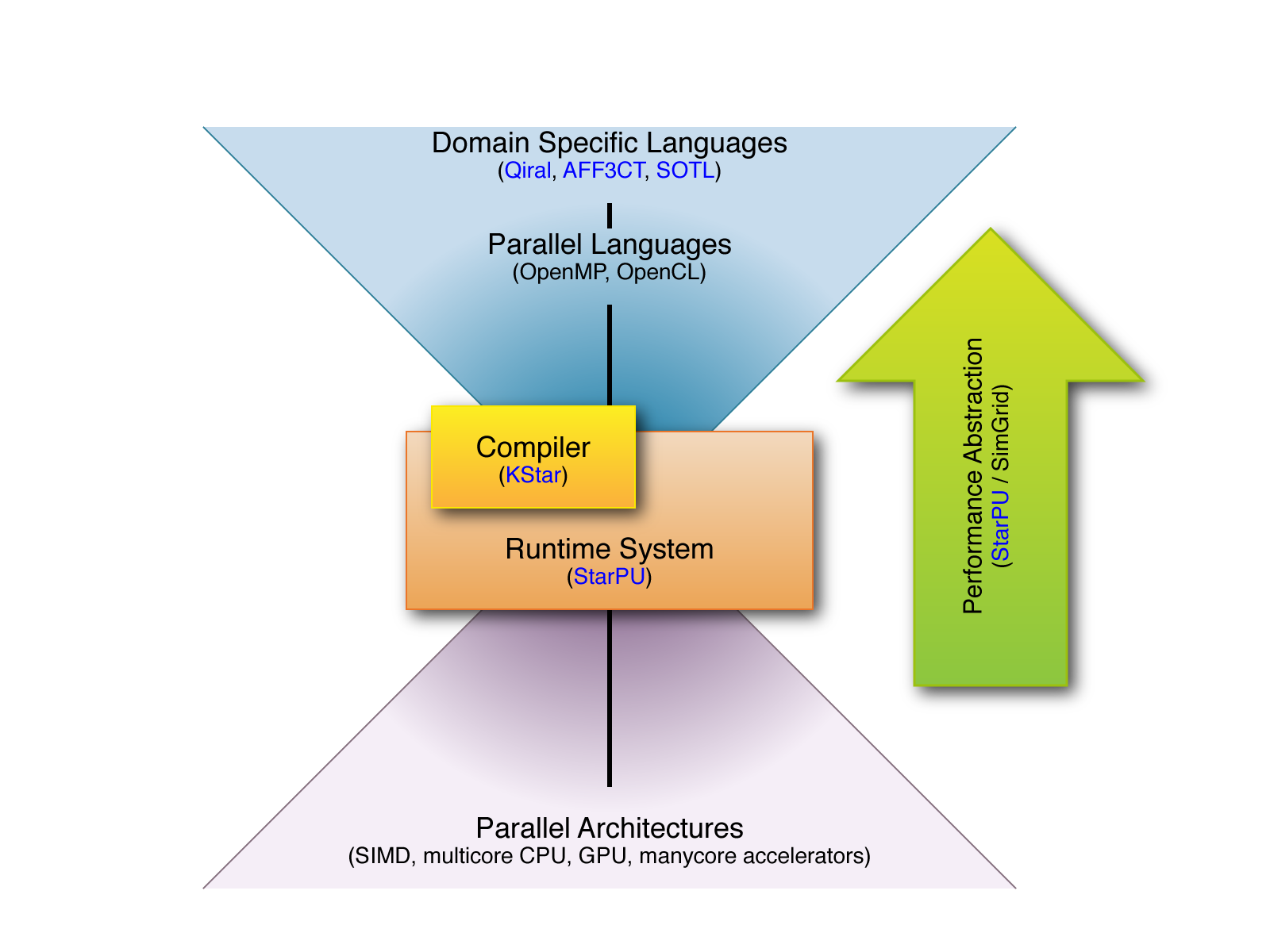

STORM stands for Static Optimizations and Runtime Methods. The team combines strengths in high-level domain-specific languages (DSLs), heterogeneous runtime systems and performance analysis tools to help programmers portably obtain the highest efficiency from modern computer architectures.

[Link to Team STORM’s 2023 Activity Report]

Research Directions

- High-level domain-specific languages

- Runtime systems for heterogeneous, manycore platforms

- Analysis and performance feedback tools

Parallelism, from everyday devices to HPC

Parallel processor architectures are now used on virtually every computer platform, from smartphones and embedded devices to high-performance computing (HPC) machines. This evolution affects every application, as the potential performance gains open the way to new usages, scientific progress and industrial innovation. The downside, however, is the difficulty of developing algorithms and code that can efficiently exploit such parallelism.

Fast evolution, challenging complexity

Ever-increasing core counts and increasingly dense processor sockets must be fed ever-increasing amounts of parallel work. Specialized cores (accelerators, big.LITTLE architectures) introduce heterogeneity. Deepening hierarchies of memory (cache levels) and of hardware parallelism (instructions, vectors, threads) require suitably structured algorithms. As a result, programming modern architectures demands a daunting level of expertise, and the costly optimization effort involved can quickly be rendered obsolete by the fast pace of hardware evolution.

Combining Strengths

To tackle the complexity of parallelism, a coordinated set of programming tools and techniques is needed before (compiler), during (runtime) and after (analysis) program execution. Team STORM aims to combine strengths along these three directions: high-level domain-specific languages; runtime systems for heterogeneous, manycore platforms; and analysis and performance feedback tools.

Collaborations

Maelstrom Associate Team (2022 – 2024)

The Maelstrom Inria Associate Team is a joint effort with SIMULA’s HPC Department in Oslo, Norway, to integrate advanced HPC optimization techniques into FEniCS, a high-level programming environment for developing finite element-based simulations.