Real-Time Visual Reconstruction by Mixing Multiple Depth and Color Cameras


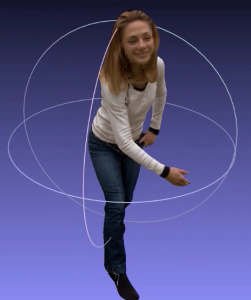

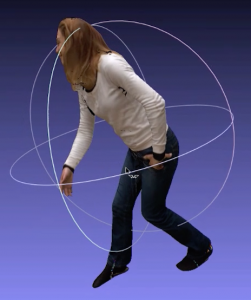

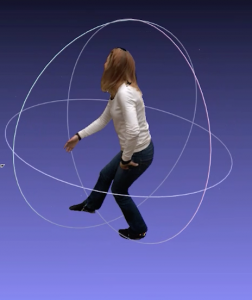

The MIXCAM project (Real-Time Visual Reconstruction by Mixing Multiple Depth and Color Cameras) was funded by France’s Agence Nationale de la Recherche (ANR), programme BLANC. The project started on February 1, 2014 and ran for two years. MIXCAM develops algorithms and real-time software packages for live visualization of 3D color and depth data. The targeted application of MIXCAM is glasses-free 3D television. The PERCEPTION team is associated with 4D View Solutions, a company specializing in integrated hardware/software solutions for 3D visual content.

Humans have an extraordinary ability to see in three dimensions, thanks to their sophisticated binocular vision system. While both biological and computational stereopsis have been thoroughly studied for the last fifty years, film and TV methodologies and technologies have exclusively used 2D image sequences, including the very recent 3D movie productions that use two image sequences, one for each eye. This state of affairs is due to two fundamental limitations: it is difficult to obtain 3D reconstructions of complex scenes, and glasses-free multi-view 3D displays, which are likely to need real 3D content, are still under development.

The objective of MIXCAM is to develop novel scientific concepts and associated methods and software for producing live 3D content for glasses-free multi-view 3D displays. MIXCAM will combine (i) theoretical principles underlying computational stereopsis, (ii) multiple-camera reconstruction methodologies, and (iii) active-light sensor technology in order to develop a complete methodological pipeline for content production and visualization, as well as an associated proof-of-concept demonstrator implemented on a multiple-sensor/multiple-PC platform supporting real-time distributed processing. MIXCAM plans to develop an original approach based on methods that combine color cameras with time-of-flight (TOF) cameras: TOF-stereo robust matching, accurate and efficient 3D reconstruction, realistic photometric rendering, real-time distributed processing, and the development of an advanced mixed-camera platform.

The MIXCAM consortium is composed of two French partners (INRIA and 4D View Solutions). The MIXCAM partners will develop scientific software that will be demonstrated using a prototype of a novel platform developed by 4D View Solutions, which will be available at INRIA, thus facilitating scientific and industrial exploitation.

Perception team members involved in MIXCAM

Georgios Evangelidis (PI), Radu Horaud, Miles Hansard, Pierre Arquier, Soraya Arias, Quentin Pelorson.

Publications

G. Evangelidis and R. Horaud. Joint Alignment of Multiple Point Sets with Batch and Incremental Expectation-Maximization. IEEE Transactions on Pattern Analysis and Machine Intelligence. 2017. https://arxiv.org/abs/1609.01466.

R. Horaud, G. Evangelidis, M. Hansard, and C. Ménier (October 2015). An Overview of Range Scanners and Depth Cameras Based on Time-of-Flight Technologies. Machine Vision and Applications. 27 (7), 1005-102, 2016.

G. D. Evangelidis, M. Hansard, and R. Horaud. Fusion of Range and Stereo Data for High-Resolution Scene-Modeling. IEEE Transactions on Pattern Analysis and Machine Intelligence, 37 (11), 2178-2192, 2015.

G. Evangelidis, D. Kounades-Bastian, R. Horaud, and E. Psarakis. A Generative Model for the Joint Registration of Multiple Point Sets. European Conference on Computer Vision (ECCV’14), Zurich, Switzerland, September 2014.

M. Hansard, G. Evangelidis, and R. Horaud. Cross-calibration of Time-of-flight and Colour Cameras. Computer Vision and Image Understanding, special issue on Camera Networks, 134, 105-115, 2015.

M. Hansard, R. Horaud, M. Amat, and G. Evangelidis. Automatic Detection of Calibration Grids in Time-of-Flight Images. Computer Vision and Image Understanding, 121, 108-118, 2014.

| 13 October 2014 | |

| 24 March 2016 |